Everyone knows the Bulls are really, really far from being Good. They have one of the league's worst situations when it comes to young or in-prime talent on the roster. This lack of promise for the future and talent in the present, among many other reasons, is why Chicago's former front office was relieved of its duties earlier this month.

I was curious just how far off the Bulls are, though, because knowing that could help set realistic expectations for what the next lead decision-maker in the Second City can pull off, and on what timetable. It might also offer some insight into how the franchise should proceed.

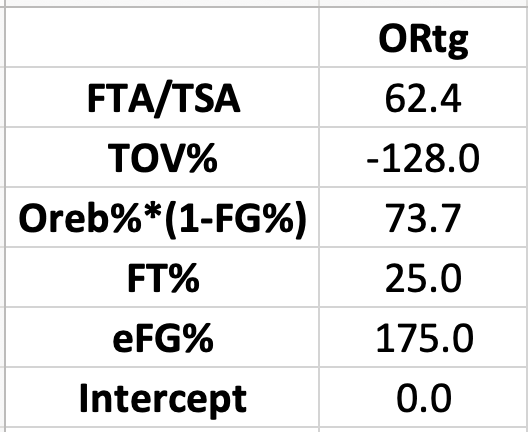

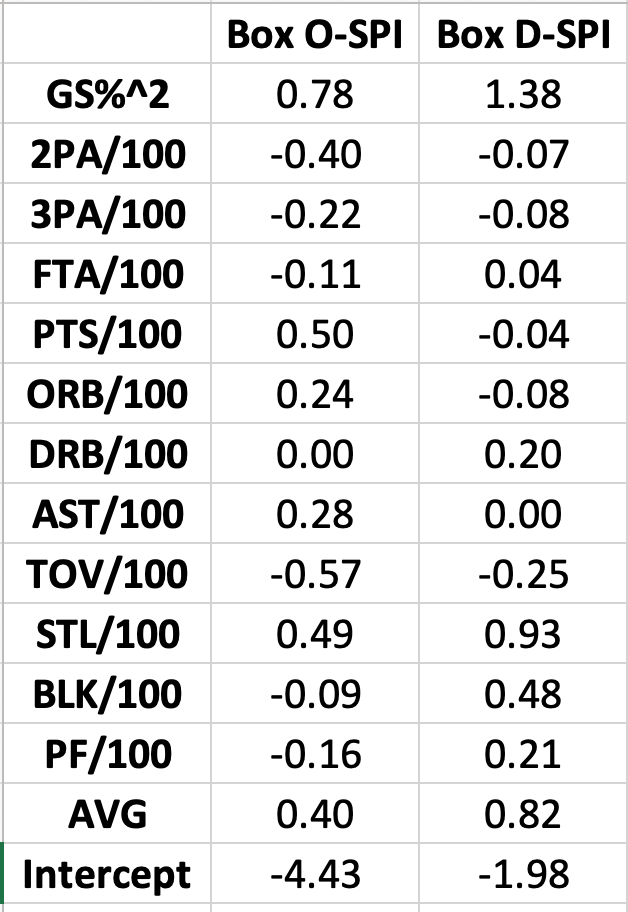

A quick and dirty analysis should suffice for this exercise. To get a rough sense of what the Bulls should be aiming for roster-wise, I looked at the talent levels of teams that finished in the top 4 of opponent-adjusted Net Rating at Basketball-Reference from 2018-19 through 2024-25. Almost every title winner since the end of the Dynasty Warriors (and the beginning of the NBA's Parity Era) has finished in the top 4 of this pace-adjusted, opponent-adjusted view of team strength. The lone exception is the 2023 Denver Nuggets, who were 7th, though they trailed the 4th-place team by just 0.55 points per 100 possessions. I picked the top 4 because, all else equal, those teams should approximate which teams are Conference Finals Worthy. To me, that is as good a definition of a Contending Team as any.

To get a sense of the average talent level of those Top 4 Teams, I tiered players by BPM (excluding anyone who played fewer than 500 minutes) and sorted them into the following buckets:

- Top 5
- Top 6-10
- Top 11-25
- Top 26-50
- Top 51-100
- Top 101-150
- Other
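Here is a minimal Python sketch of that tiering step. It assumes a season's data is available as a list of records with a name, minutes played, and BPM. The `Player` type, the function names, and the sample players are hypothetical stand-ins, not the actual process behind the numbers in this piece. Treating exactly 500 minutes as eligible is also an assumption.

```python
from dataclasses import dataclass

# Rank-based tiers: (label, worst rank that still belongs to the tier).
TIERS = [
    ("Top 5", 5),
    ("Top 6-10", 10),
    ("Top 11-25", 25),
    ("Top 26-50", 50),
    ("Top 51-100", 100),
    ("Top 101-150", 150),
]

MIN_MINUTES = 500  # players below this are excluded (assumed: exactly 500 counts)


@dataclass
class Player:
    name: str      # hypothetical record fields
    minutes: int
    bpm: float


def tier_for_rank(rank: int) -> str:
    """Map a 1-based BPM rank to its tier label."""
    for label, last_rank in TIERS:
        if rank <= last_rank:
            return label
    return "Other"


def bucket_players(players: list[Player]) -> dict[str, str]:
    """Drop low-minute players, rank the rest by BPM (best first), assign tiers."""
    eligible = [p for p in players if p.minutes >= MIN_MINUTES]
    ranked = sorted(eligible, key=lambda p: p.bpm, reverse=True)
    return {p.name: tier_for_rank(rank) for rank, p in enumerate(ranked, start=1)}


# Hypothetical league: 160 qualified players with steadily falling BPM,
# plus one high-BPM player who didn't reach the minutes floor.
league = [Player(f"Player {i}", 1500, 10.0 - 0.1 * i) for i in range(1, 161)]
league.append(Player("Small Sample", 120, 15.0))

tiers = bucket_players(league)
print(tiers["Player 3"], tiers["Player 40"], tiers["Player 151"])  # Top 5 Top 26-50 Other
print("Small Sample" in tiers)  # False
```

The minutes filter runs before ranking. As a result, a small-sample outlier never pushes a qualified player down a tier.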

The results were as follows:

| BPM Bucket | Avg. Players per Top 4 Team |
| --- | --- |
| Top 5 | 0.3 |
| Top 6-10 | 0.2 |
| Top 11-25 | 1.3 |
| Top 26-50 | 1.7 |
| Top 51-100 | 2.4 |
| Top 101-150 | 2.0 |
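Summing the per-bucket averages gives the headline totals used below. The values are copied straight from the table; the variable names are only for illustration.

```python
# Average number of players per Top 4 team in each BPM bucket (from the table).
avg_players = {
    "Top 5": 0.3,
    "Top 6-10": 0.2,
    "Top 11-25": 1.3,
    "Top 26-50": 1.7,
    "Top 51-100": 2.4,
    "Top 101-150": 2.0,
}

top_25 = avg_players["Top 5"] + avg_players["Top 6-10"] + avg_players["Top 11-25"]
top_150 = sum(avg_players.values())

print(f"Top 25 players per contender:  {top_25:.1f}")   # 1.8
print(f"Top 150 players per contender: {top_150:.1f}")  # 7.9
```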

This suggests that to be a Contender in the NBA, you need roughly 8 "plus" players in your rotation (the Top 150 BPM cutoff translates to roughly +0.0). You want them distributed so that you have at least 2 Top 25 players, 2 in the Top 26-50 bucket, 2 in the Top 51-100 bucket, and 2 in the Top 101-150 bucket. That's a lot of good players and, obviously, more is better. Treat this as a baseline target, not a ceiling. So what do those buckets translate to in BPM values?

Averaged over the same seven seasons (2018-19 through 2024-25), the cutoffs for these buckets are as follows:

| BPM Bucket | Avg. BPM Cutoff |
| --- | --- |
| Top 5 | 7.9 |
| Top 6-10 | 6.2 |
| Top 11-25 | 3.9 |
| Top 26-50 | 2.6 |
| Top 51-100 | 0.9 |
| Top 101-150 | 0.0 |
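To place an individual player, the average cutoffs can be applied as simple thresholds. This sketch treats the averages in the table as fixed lines, which is an approximation. The real tiers are rank-based, and the actual cutoffs move a bit from year to year. The function name is hypothetical.

```python
# Average BPM cutoffs per bucket (from the table above), best bucket first.
CUTOFFS = [
    ("Top 5", 7.9),
    ("Top 6-10", 6.2),
    ("Top 11-25", 3.9),
    ("Top 26-50", 2.6),
    ("Top 51-100", 0.9),
    ("Top 101-150", 0.0),
]


def bucket_for_bpm(bpm: float) -> str:
    """Return the first (highest) bucket whose average cutoff the BPM meets."""
    for label, cutoff in CUTOFFS:
        if bpm >= cutoff:
            return label
    return "Other"


# BPM figures from this past season, as cited in this piece.
for name, bpm in [("Josh Giddey", 2.7), ("Tre Jones", 1.3), ("Matas Buzelis", -1.0)]:
    print(f"{name}: {bpm:+.1f} -> {bucket_for_bpm(bpm)}")
```

Checking from the top bucket down means each player lands in the best tier he qualifies for. A BPM of exactly 0.0 counts as Top 150, which matches the "roughly +0.0" line.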

So, where do the Bulls stand today with the players on their roster going into 2026-27?

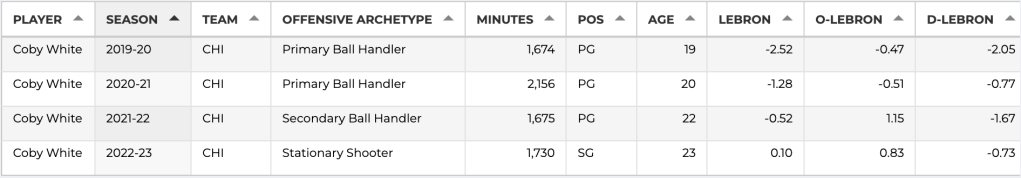

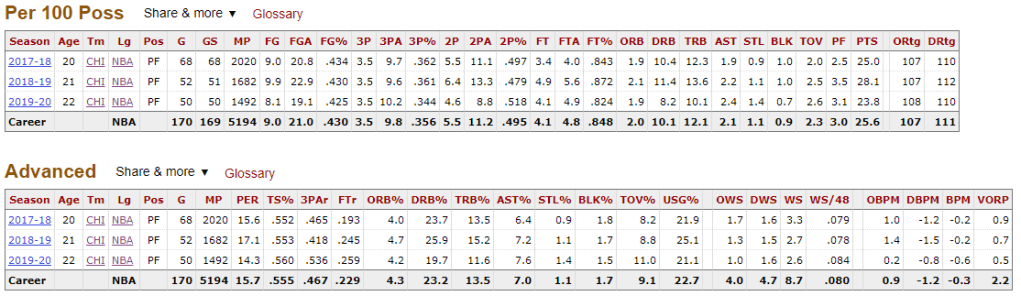

Josh Giddey finished this past season with a +2.7 BPM, which puts him in the Top 26-50 bucket. Tre Jones landed at a +1.3 BPM, which puts him in the Top 51-100 bucket. No other player rostered for next year currently qualifies as a Top 150 player by BPM.

You can quibble with the use of BPM here and suggest that, maybe, Jalen Smith and his on-off metric dominance should be on this list. I wouldn't fight you on it, but even then that's only 3 Top 150 players, when you need EIGHT to be a real contender. And you can't just grab 8 guys ranked somewhere between 50th and 150th and pray (which seemed to be Arturas Karnisovas's plan). You need a distribution: high-end talent, elite role players, and then enough additional depth to fill out an eight-man rotation.
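Counting the gap band by band makes the distribution point concrete. This is a sketch that assumes the two-per-band target described earlier, with Top 5, 6-10, and 11-25 folded into one Top 25 band. It counts only the two players who clear the bar by BPM. The `shortfall` function is a hypothetical helper.

```python
from collections import Counter

# Rough contender target from the analysis above: two players in each band.
TARGET = {
    "Top 25": 2,
    "Top 26-50": 2,
    "Top 51-100": 2,
    "Top 101-150": 2,
}

# Bulls rostered for next year who clear the Top 150 line by BPM.
bulls = {
    "Josh Giddey": "Top 26-50",   # +2.7 BPM this past season
    "Tre Jones": "Top 51-100",    # +1.3 BPM this past season
}


def shortfall(roster: dict[str, str], target: dict[str, int]) -> dict[str, int]:
    """How many more players each band needs to reach the target."""
    have = Counter(roster.values())  # missing bands count as 0
    return {band: max(need - have[band], 0) for band, need in target.items()}


gaps = shortfall(bulls, TARGET)
print(gaps)                               # {'Top 25': 2, 'Top 26-50': 1, 'Top 51-100': 1, 'Top 101-150': 2}
print("Total missing:", sum(gaps.values()))  # Total missing: 6
```

Add Jalen Smith to any one of the four bands and the total only drops to 5.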

Also troubling is that 2 of the Bulls' (arguably) Top 150 guys are smack in the middle of their primes: Tre Jones was 26 this year and Jalen Smith was 25. Both are on cheapo deals and, as this exercise shows, could clearly help a team with real aspirations right now. The Bulls are too far from contending to take advantage of Jones's and Smith's goodness and cheapness. Their next decision-maker should therefore look hard at selling both for value. Spinning that value forward into draft capital makes the most sense for the long-term health of the franchise.

Such moves would leave the Bulls with only one Top 150 player on the whole roster: Josh Giddey. Giddey will be 24 next season and is on a decent contract. In theory, given his youth and current goodness, he could be part of the next Bulls contender. However, his unique play style, his particular mix of strengths and weaknesses, and his poor defense make him a dubious fit next to better players, so even he might not be worth keeping. I'm fine with keeping him for the time being, until the Bulls have a better sense of who their next franchise pillar will be.

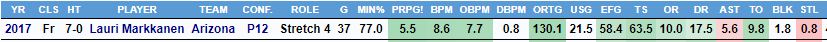

You might be wondering: what about Matas Buzelis? He put up a -1.0 BPM this past season, and more advanced metrics weren't much kinder. Buzelis is, however, very young. Daniel Myers, the creator of BPM, published an aging curve for the metric years ago. Following it, Buzelis is likely to peak in his age-27 season. If he follows the curve (i.e., isn't a developmental outlier), he should peak around +2.1 BPM, which would put him in the Top 51-100 bucket. That's certainly a good player, but not one you should necessarily view as a franchise cornerstone. Giddey, for his part, should peak near the end of his current contract, at age 27, as roughly a +4.0 BPM (Top 11-25) player. I'm much less sanguine about Giddey's likely peak than that Top 25 BPM suggests, because of fit issues and because BPM doesn't capture defensive impact very well.
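The projected peaks can be run through the same average cutoffs to confirm the tiers quoted above. Comparing current and peak BPM also shows how much growth each projection assumes. This does not reproduce Myers's aging curve. It simply takes the current and projected-peak figures from this section as given. The cutoff list is repeated so the snippet runs on its own, and the names are illustrative.

```python
# Average BPM cutoffs per bucket, best bucket first.
CUTOFFS = [
    ("Top 5", 7.9),
    ("Top 6-10", 6.2),
    ("Top 11-25", 3.9),
    ("Top 26-50", 2.6),
    ("Top 51-100", 0.9),
    ("Top 101-150", 0.0),
]


def bucket_for_bpm(bpm: float) -> str:
    """Return the highest bucket whose average cutoff the BPM meets."""
    for label, cutoff in CUTOFFS:
        if bpm >= cutoff:
            return label
    return "Other"


# (BPM this past season, projected age-27 peak BPM) as quoted in this section.
projections = {
    "Matas Buzelis": (-1.0, 2.1),
    "Josh Giddey": (2.7, 4.0),
}

for name, (current, peak) in projections.items():
    growth = peak - current
    print(f"{name}: {current:+.1f} now, {peak:+.1f} at peak "
          f"({growth:+.1f} growth) -> {bucket_for_bpm(peak)}")
```

Buzelis's projection implies about +3.1 points of growth, ending in the Top 51-100 band. Giddey's implies about +1.3, ending in the Top 11-25 band.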

Leonard Miller is another young piece who seems like he could crack the league's Top 150 someday. Beyond him, the Bulls have the dice roll of Noa Essengue, their 2025 lottery pick, who barely played before a shoulder injury sidelined him for the year. If both of those guys hit as rotation-level players on a Contender, the Bulls would be up to 4 of the needed 8 Top 150 players, with 0 (zero) Top 10 players.

So what does this suggest, strategically, as the best path forward?

The players who matter on the roster (Giddey, Buzelis) are young enough that they are at least a few years from their peaks. The Bulls also have a grip of cap space this summer and two top-15 first-round picks in the heralded 2026 NBA Draft. Given how little talent they have and how young their promising players are, the Bulls should be patient.

The soundest path forward is to use their cap space to absorb bad contracts in exchange for draft assets. That gives them more bites at the apple for truly game-changing talent. The Bulls should absolutely not skip steps here. And given the lack of high-upside talent on the roster, their draft strategy should be to swing on the highest-ceiling prospects available at their slots (likely 9th and 15th). No drafting 24-year-old Yaxel Lendeborg, please.

There will be a strong temptation to rebuild this thing on the fly, given how directionless and bad the Bulls have been in the decade since the Jimmy Butler trade. Hopefully this exercise lays out why that would be a mistake. Building a Contending roster in a single offseason is extremely unlikely when your cupboard is as bare as Chicago's. Slow and steady should be their mantra.